AI-Generated Character Dialogue in Games

AI is transforming how game characters interact with players. This article explains how AI powers dynamic NPC dialogue, highlights top tools like Inworld AI, GPT-4, and Convai, and explores real-world game examples using generative conversation.

Video games have traditionally relied on pre-scripted dialogue trees, where NPCs (non-player characters) deliver fixed lines in response to player actions. Today, AI-driven dialogue uses machine learning models—particularly large language models (LLMs)—to dynamically generate character responses. As the Associated Press reports, studios are now "experimenting with generative AI to help craft NPC dialogue" and create worlds "more responsive" to player creativity.

In practice, this means NPCs can remember past interactions, respond with novel lines, and engage in free-form conversations instead of repeating canned responses. Game studios and researchers note that LLMs' strong contextual understanding produces "natural-sounding responses" that can replace traditional dialogue scripts.

Why AI Dialogue Matters

Immersion & Replayability

NPCs gain lifelike personalities with depth and dynamism, creating richer conversations and stronger player engagement.

Contextual Awareness

Characters remember past encounters and adapt to player choices, making worlds feel more responsive and alive.

Emergent Gameplay

Players can interact in freeform ways, driving emergent stories instead of following predetermined quest paths.

AI as a Creative Tool, Not a Replacement

AI-powered dialogue is designed to assist developers, not replace human creativity. Ubisoft emphasizes that writers and artists still define each character's core identity.

Developers "shape [an NPC's] character, backstory, and conversation style," and then use AI "only if it has value for them" – AI "must not replace" human creativity.

— Ubisoft, NEO NPC Project

In Ubisoft's prototype "NEO NPC" project, designers first craft an NPC's backstory and voice, then guide the AI to follow that character. Generative tools function as "co-pilots" for narrative, helping writers explore ideas quickly and efficiently.

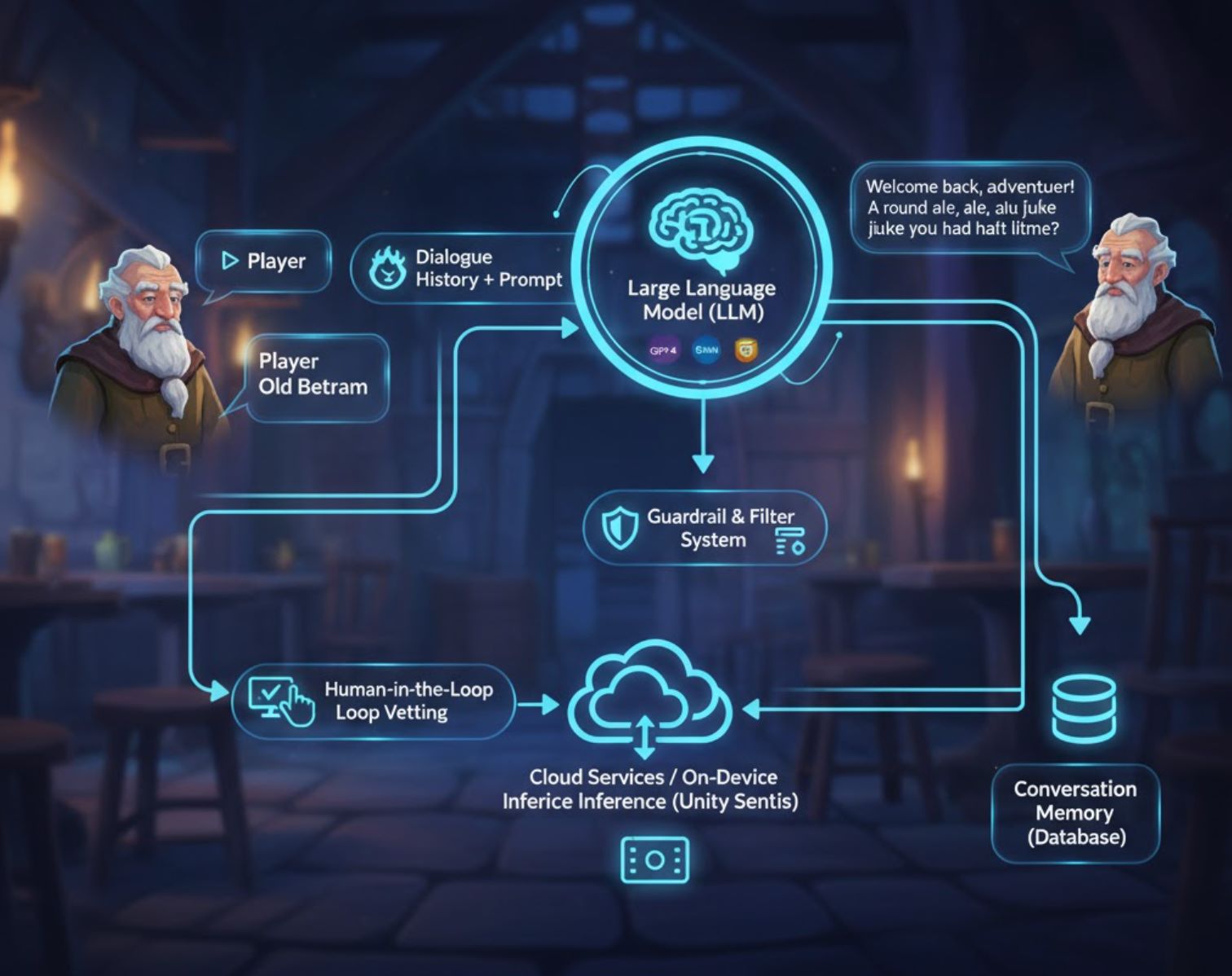

How AI Dialogue Systems Work

Most AI dialogue systems use large language models (LLMs) like GPT-4, Google Gemini, or Claude—neural networks trained on vast text data to generate coherent responses.

Character Definition

Developers provide a prompt describing the NPC's personality and context (e.g., "You are an old tavern keeper named Old Bertram, who speaks kindly and remembers the player's previous orders")

Real-Time Generation

When a player talks to an AI-NPC, the game sends the prompt and dialogue history to the language model via API

Response Delivery

The AI returns a dialogue line, which the game displays or voices in real-time or near-real-time

Memory Retention

Conversation logs are stored so the AI knows what was said earlier and maintains coherence across sessions
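As a rough illustration of the four steps above, here is a minimal Python sketch. The `NPC` class, `build_messages`, and `fake_model` are hypothetical names, and the role/content message layout is a common chat-API convention assumed here rather than any specific vendor's API. A real game would replace `fake_model` with a call to its chosen model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Message = Dict[str, str]  # {"role": ..., "content": ...}; assumed chat-style layout


@dataclass
class NPC:
    """Hypothetical container for one character's prompt and memory."""
    name: str
    persona: str  # character definition, used as the system prompt
    history: List[Message] = field(default_factory=list)  # memory retention

    def build_messages(self, player_line: str) -> List[Message]:
        # Real-time generation: persona first, then prior turns, then the new input.
        return (
            [{"role": "system", "content": self.persona}]
            + self.history
            + [{"role": "user", "content": player_line}]
        )

    def talk(self, player_line: str, generate: Callable[[List[Message]], str]) -> str:
        # Response delivery: the game would display or voice the returned line.
        reply = generate(self.build_messages(player_line))
        # Store both sides of the exchange so later turns can refer back to them.
        self.history.append({"role": "user", "content": player_line})
        self.history.append({"role": "assistant", "content": reply})
        return reply


def fake_model(messages: List[Message]) -> str:
    """Stand-in for a real LLM API call; it only reports how much context it saw."""
    return f"(reply after seeing {len(messages)} messages)"


bertram = NPC(
    name="Old Bertram",
    persona="You are an old tavern keeper named Old Bertram, who speaks kindly "
            "and remembers the player's previous orders.",
)
first = bertram.talk("An ale, please.", fake_model)
second = bertram.talk("The usual.", fake_model)
```

The second call sees more messages than the first because the stored history is sent along with the new line. Keeping that memory across play sessions would additionally require saving `history` to disk or a database, which is not shown here.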

Safeguards & Quality Control

Teams build in multiple safeguards to maintain character consistency and prevent inappropriate responses:

- Guardrail systems and toxicity filters keep NPCs in character

- Human-in-the-loop iteration: if an NPC "answered as the character we had in mind," developers keep it; otherwise, they tweak model prompts

- High-quality prompts: dialogue quality depends heavily on prompt quality ("garbage in, garbage out")

- Deployment choice: cloud services offload computation, while on-device inference (e.g., Unity Sentis) avoids network round trips and can reduce latency
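One simple form of guardrail is an output check that swaps in a scripted line when a generated reply fails. The sketch below is only a keyword and length filter under assumed names (`passes_guardrails`, `safe_reply`, `BANNED`, `FALLBACK_LINE` are all hypothetical); production systems typically add trained toxicity classifiers on top.

```python
import re
from typing import Iterable

FALLBACK_LINE = "Hmm. Let's talk about something else."  # hypothetical scripted line
BANNED = ["smartphone", "language model"]  # example out-of-character terms for a fantasy NPC


def passes_guardrails(reply: str, banned_terms: Iterable[str], max_chars: int = 300) -> bool:
    """Return True if the reply is non-empty, short enough, and has no banned term."""
    if not reply.strip() or len(reply) > max_chars:
        return False
    lowered = reply.lower()
    for term in banned_terms:
        # Whole-word match, so a ban on "ale" would not trip on "female".
        if re.search(rf"\b{re.escape(term.lower())}\b", lowered):
            return False
    return True


def safe_reply(reply: str, banned_terms: Iterable[str]) -> str:
    """Use the generated line only if it passes; otherwise fall back to a scripted one."""
    return reply if passes_guardrails(reply, banned_terms) else FALLBACK_LINE
```

For example, `safe_reply("As a language model, I cannot pour ale.", BANNED)` returns the fallback line, while an in-character greeting passes through unchanged.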

Benefits and Challenges

Advantages for Developers & Players

- Time savings: Draft conversations quickly instead of writing every line by hand

- Creative brainstorming: Use AI as a starting point to explore new dialogue directions

- Scalability: Generate long chat sessions and personalized story branches

- Player engagement: NPCs that remember past encounters feel more alive and adaptive

- Emergent storytelling: Players can drive freeform interactions in sandbox or multiplayer games

Pitfalls to Manage

- Meaningless chat: Unlimited, random dialogue is "just endless noise" and breaks immersion

- Hallucination: AI can generate off-topic lines unless carefully constrained with context

- Computational cost: LLM API calls add up at scale; usage fees can strain budgets

- Ethical concerns: Voice actors and writers worry about job displacement

- Transparency: Some developers are considering whether to disclose AI-written lines to players
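To see how API costs add up at scale, a back-of-the-envelope estimate can help. The function below is a sketch; every number in the example is a placeholder chosen for illustration, not a real price or a measured usage figure.

```python
def monthly_llm_cost(
    players: int,
    turns_per_player: int,
    tokens_per_turn: int,
    usd_per_million_tokens: float,
) -> float:
    """Estimate monthly spend as total tokens times a per-million-token price."""
    total_tokens = players * turns_per_player * tokens_per_turn
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Placeholder inputs: 10,000 players, 200 dialogue turns each per month,
# 1,500 tokens per turn (prompt plus reply), and a hypothetical $2 per million tokens.
estimate = monthly_llm_cost(
    players=10_000,
    turns_per_player=200,
    tokens_per_turn=1_500,
    usd_per_million_tokens=2.0,
)
```

Note that `tokens_per_turn` tends to grow over a conversation, because the dialogue history is resent with each request.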

Tools & Platforms for AI Dialogue in Games

Game creators have many options for AI dialogue. Here are some notable tools and technologies:

Inworld AI

Application Information

| Field | Details |
| --- | --- |
| Developer | Inworld AI, Inc. |
| Language Support | Primarily English; multilingual voice generation and localization features in development. |
| Pricing Model | Freemium: free credits with pay-as-you-go usage for LLM dialogue and text-to-speech. |

Overview

Inworld AI is a generative AI platform designed to create highly realistic, emotionally intelligent non-player characters (NPCs) for games. By combining memory, goals, personality, and voice synthesis, it enables dynamic, context-aware conversations that evolve based on player behavior and world state. Game developers can build AI-driven characters using visual tools, then integrate them with game engines like Unreal or via API.

Key Features

Characters with memory, goals, and emotional dynamics that respond naturally to player interactions.

No-code, graph-based Studio interface to define personality, knowledge, relationships, and dialogue style.

Low-latency TTS with built-in voice archetypes tailored for gaming and emotional nuance.

NPCs recall past interactions and evolve relationships with players over time.

Filter character knowledge and moderate responses to ensure realistic and safe NPC behavior.

SDKs and plugins for Unreal Engine, Unity (early access), and Node.js agent templates.

Getting Started

Sign up for an Inworld Studio account on the Inworld website to access the character builder.

Use Studio to define persona, memory, emotional graphs, and knowledge base for your NPC.

Download the Unreal Runtime SDK or Unity plugin, then import character template components into your project.

Set up player input (speech or text), connect to the dialogue graph, and map output to text-to-speech and lip-sync.

Define what your NPC knows and how its knowledge evolves in response to player actions over time.

Prototype interactions in Studio, review generated dialogue, tune character goals and emotional weights, then re-deploy.

Use the API or integrated SDK to launch your AI-driven NPCs in your game or interactive experience.

Important Considerations

Configuration & Optimization

- Memory tuning and safety filtering require careful configuration to prevent unrealistic or unsafe NPC responses

- Voice localization is expanding but not all languages are currently available

- Test character behavior thoroughly before production deployment to ensure quality interactions

Frequently Asked Questions

Inworld Studio provides a no-code, graph-based interface for designing character personality, dialogue, and behavior without programming knowledge.

Inworld includes an expressive text-to-speech API with gaming-optimized voices and built-in character archetypes. TTS is integrated into the Inworld Engine.

Inworld uses usage-based pricing: you pay per million characters for text-to-speech and compute costs for LLM dialogue generation. Free credits are available to get started.

Inworld supports long-term memory, allowing NPCs to recall past interactions and maintain evolving relationships with players across multiple sessions.

The Inworld AI NPC Engine plugin is available on the Epic Games Marketplace for Unreal Engine integration.

HammerAI

Application Information

| Field | Details |
| --- | --- |
| Developer | HammerAI (solo-developer / small team) |
| Language Support | Primarily English; character creation supports various styles without geographic limitations |
| Pricing Model | Free tier with unlimited conversations and characters; paid plans (Starter, Advanced, Ultimate) offer expanded context size and advanced features |

Overview

HammerAI is a powerful AI platform designed for creating realistic, expressive character dialogue. It empowers writers, game developers, and role-players to interact with AI-driven personas through intuitive chat, enabling them to build rich lore, backstories, and immersive conversations. The platform supports both local language models and cloud-hosted options, providing flexibility between privacy and scalability.

Key Features

Free tier supports unlimited chats and character creation without restrictions.

Run powerful LLMs locally via desktop for privacy or use cloud-hosted models for convenience.

Build detailed lore, backstories, and character settings to enrich dialogue and maintain consistency.

Specialized mode for writing dialogues for game cutscenes and interactive narrative sequences.

Desktop app supports image generation during chats using built-in models like Flux.

Invite up to 10 characters in a single group chat for complex multi-character interactions.

Detailed Introduction

HammerAI provides a unique environment for creating and conversing with AI characters. Through the desktop application, users can run language models locally on their own hardware using Ollama or llama.cpp, ensuring privacy and offline functionality. For those preferring cloud-based solutions, HammerAI offers secure remote hosting for unlimited AI chat without requiring an account.

The character system supports lorebooks, personal backstories, and dialogue style tuning, making it ideal for narrative development in games, scripts, and interactive fiction. The platform includes specialized tools for cutscene dialogue generation, enabling rapid creation of cinematic and game-story sequences with proper formatting for spoken dialogue, thoughts, and narration.

Getting Started Guide

Get HammerAI from its itch.io page for Windows, macOS, or Linux.

Use the "Models" tab in the desktop app to download language models like Mistral-Nemo or Smart Lemon Cookie.

Pick from existing AI character cards or create your own custom character via Author Mode.

Enter dialogue or actions using normal text for speech or italics for narration and thoughts.

Click "Regenerate" if unsatisfied with the AI's reply, or edit your input to guide better responses.

Create and store character backstories and world lore to maintain consistent context throughout conversations.

Switch to cutscene dialogue mode to write cinematic or interactive narrative exchanges for games and stories.

Limitations & Important Notes

- Offline use requires downloading character and model files in advance

- Cloud models limited to 4,096 token context on free plan; higher-tier plans offer expanded context

- Chats and characters stored locally; cross-device sync unavailable due to lack of login system

- Cloud-hosted models use content filters; local models are less restricted

- Local model performance depends on available RAM and GPU resources

Frequently Asked Questions

HammerAI offers a free tier that supports unlimited conversations and character creation. Paid plans (Starter, Advanced, Ultimate) provide expanded context size and additional features for advanced users.

HammerAI can run offline through the desktop app with local language models, provided you download character and model files in advance.

The desktop app supports image generation during chat using built-in models like Flux, allowing you to create visual content alongside your conversations.

Use the lorebook feature to build and manage character backstories, personality traits, and world knowledge. This ensures consistent context throughout your conversations.

If a reply is unsatisfactory, you can regenerate it, edit your input to provide better guidance, or adjust your roleplay prompts to steer the AI toward better output.

Large Language Models (LLMs)

Application Information

| Field | Details |
| --- | --- |
| Developer | Multiple providers: OpenAI (GPT series), Meta (LLaMA), Anthropic (Claude), and others |
| Language Support | Primarily English; multilingual support varies by model (Spanish, French, Chinese, and more available) |
| Pricing Model | Freemium or paid; free tiers available for some APIs, while larger models or high-volume usage require subscription or pay-as-you-go plans |

Overview

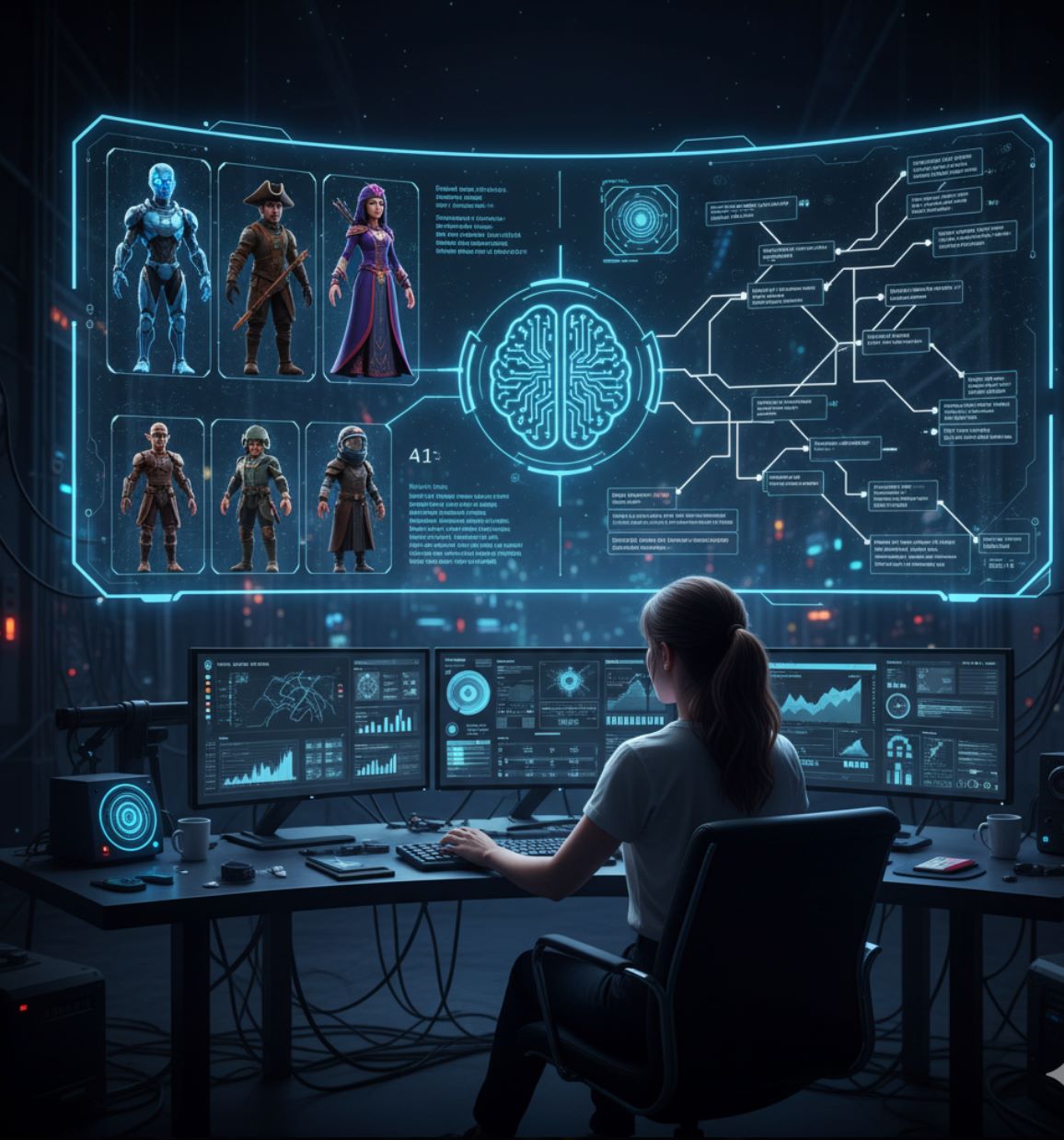

Large Language Models (LLMs) are advanced AI systems that generate coherent, context-aware text for dynamic gaming experiences. In game development, LLMs power intelligent NPCs with real-time dialogue, adaptive storytelling, and interactive roleplay. Unlike static scripts, LLM-powered characters respond to player input, maintain conversation memory, and create unique narrative experiences that evolve with player choices.

How LLMs Work in Games

LLMs are trained on vast amounts of text and generate dialogue by predicting likely continuations of a prompt. Developers use prompt engineering and fine-tuning to shape NPC responses while maintaining story coherence. Techniques like retrieval-augmented generation (RAG) look up relevant stored interactions and lore and insert them into the prompt, so characters appear to remember them. The result is believable, immersive NPCs for role-playing, adventure, and narrative-driven games.

Creates context-sensitive NPC conversations in real time, responding naturally to player input.

Generates quests, events, and narrative branches that adapt to game state and player decisions.

Maintains character consistency using defined backstories, goals, and personality traits.

Recalls prior interactions and game world facts for coherent multi-turn dialogue and persistent character knowledge.
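The retrieval step behind RAG can be sketched in a few lines. Real systems usually rank stored notes by embedding similarity; the example below swaps in plain word overlap so it stays self-contained. The functions `tokenize`, `retrieve`, and `build_prompt`, and the `LORE` entries, are hypothetical.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def retrieve(query: str, notes: List[str], k: int = 2) -> List[str]:
    """Return up to k notes that share the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(note)), note) for note in notes]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [note for score, note in ranked if score > 0][:k]


def build_prompt(persona: str, query: str, notes: List[str]) -> str:
    """Insert the retrieved notes between the persona and the player's line."""
    facts = retrieve(query, notes)
    fact_block = "\n".join(f"- {fact}" for fact in facts) or "- (nothing relevant)"
    return f"{persona}\n\nRelevant memories:\n{fact_block}\n\nPlayer: {query}"


LORE = [
    "The player ordered spiced ale on their last visit.",
    "The bridge to Northwatch collapsed during the spring flood.",
    "The blacksmith owes the tavern three silver coins.",
]
top = retrieve("Is the bridge to Northwatch open?", LORE, k=1)
```

Here `top` contains only the note about the bridge, since it shares the most words with the question. Word overlap is a crude score (common words like "the" count as matches), which is one reason embedding-based search is normally preferred.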

Getting Started

Choose a model (OpenAI GPT, Meta LLaMA, Anthropic Claude) that matches your game's requirements and performance needs.

Use cloud APIs for convenience or set up local instances on compatible hardware for greater control and privacy.

Create detailed NPC backstories, personality traits, and knowledge databases to guide LLM responses.

Craft prompts that guide LLM responses according to game context, player input, and narrative goals.

Connect LLM outputs to your game's dialogue systems using SDKs, APIs, or custom middleware solutions.

Evaluate NPC dialogue quality, refine prompts, and adjust memory handling to ensure consistency and immersion.

Important Considerations

- Hallucinations: LLMs can produce fluent but factually incorrect or lore-breaking dialogue, especially when prompts are ambiguous; use clear, specific instructions

- Hardware & Latency: Real-time integration requires powerful hardware or cloud infrastructure for responsive gameplay

- Ethical & Bias Risks: LLM outputs may include unintended biases; implement moderation and careful prompt design

- Subscription Costs: High-volume usage and fine-tuned models typically require paid API access

Frequently Asked Questions

With proper persona design, memory integration, and prompt engineering, LLMs can maintain character consistency across multiple interactions and conversations.

Real-time use is possible, though performance depends on hardware or cloud latency. Smaller local models may be preferred for real-time responsiveness, while cloud APIs work well for turn-based or asynchronous gameplay.

Many models support multilingual dialogue, but quality varies depending on the language and specific model. Test thoroughly for your target languages.

To limit inappropriate or biased output, implement moderation filters, constrain prompts with clear guidelines, and use safety layers provided by the model platform. Regular testing and community feedback help identify and address issues.

Some free tiers exist for basic usage, but larger context models or high-volume scenarios generally require subscription or pay-as-you-go plans. Evaluate costs based on your game's scale and player base.

Convai

Application Information

| Field | Details |
| --- | --- |
| Developer | Convai Technologies Inc. |
| Language Support | 65+ languages supported globally via web-based and engine integrations. |
| Pricing Model | Free access to Convai Playground; enterprise and large-scale deployments require paid plans or licensing contact. |

What is Convai?

Convai is a conversational AI platform that empowers developers to create highly interactive, embodied AI characters (NPCs) for games, XR worlds, and virtual experiences. These intelligent agents perceive their environment, listen and speak naturally, and respond in real time. With seamless integrations into Unity, Unreal Engine, and web environments, Convai brings lifelike virtual humans to life, adding immersive narrative depth and realistic dialogue to interactive worlds.

Key Features

NPCs respond intelligently to voice, text, and environmental stimuli for dynamic interactions.

Low-latency voice-based chat with AI characters for natural, immersive dialogue.

Upload documents and lore to shape character knowledge and maintain consistent, context-aware conversations.

Graph-based tools to define triggers, objectives, and dialogue flows while maintaining flexible, open-ended interactions.

Native Unity SDK and Unreal Engine plugin for seamless AI NPC embedding into your projects.

Enable AI characters to converse autonomously with each other in shared scenes for dynamic storytelling.

Getting Started Guide

Create your Convai account via their website to access the Playground and start building AI characters.

In the Playground, define your character's personality, backstory, knowledge base, and voice settings to bring them to life.

Use Convai's Narrative Design graph to establish triggers, decision points, and objectives that guide character behavior.

Unity: Download the Convai Unity SDK from the Asset Store, import it, and configure your API key.

Unreal Engine: Install the Convai Unreal Engine plugin (Beta) to enable voice, perception, and real-time conversations.

Activate Convai's NPC2NPC system to allow AI characters to converse autonomously with each other.

Playtest your scenes thoroughly, refine machine-learning parameters, dialogue triggers, and character behaviors based on feedback.

Important Limitations & Considerations

- Character avatars created in Convai's web tools may require external models for game engine export.

- Managing narrative flow across multiple AI agents requires careful design and planning.

- Real-time voice conversations may experience latency depending on backend performance and network conditions.

- Complex or high-scale deployments typically require enterprise-level licensing; free-tier access is primarily through the Playground.

Frequently Asked Questions

Convai supports NPC-to-NPC conversations through its NPC2NPC feature in both Unity and Unreal Engine, enabling autonomous character interactions.

Basic character creation is no-code via the Playground, but integrating with game engines (Unity, Unreal) requires development skills and technical knowledge.

You can define a knowledge base and memory system for each character, ensuring consistent, context-aware dialogue throughout interactions.

Real-time voice-based conversations are fully supported, including speech-to-text and text-to-speech capabilities for natural interactions.

Convai offers enterprise options including on-premises deployment and security compliance certifications such as ISO 27001 for commercial and large-scale projects.

NVIDIA ACE

Application Information

| Field | Details |
| --- | --- |
| Developer | NVIDIA Corporation |
| Language Support | Multiple languages for text and speech; globally available to developers |
| Pricing Model | Enterprise/developer access through NVIDIA program; commercial licensing required |

What is NVIDIA ACE?

NVIDIA ACE (Avatar Cloud Engine) is a generative AI platform that empowers developers to create intelligent, lifelike NPCs for games and virtual worlds. It combines advanced language models, speech recognition, voice synthesis, and real-time facial animation to deliver natural, interactive dialogues and autonomous character behavior. By integrating ACE, developers can build NPCs that respond contextually, converse naturally, and exhibit personality-driven behaviors, significantly enhancing immersion in gaming experiences.

How It Works

NVIDIA ACE leverages a suite of specialized AI components working in concert:

- NeMo — Advanced language understanding and dialogue modeling

- Riva — Real-time speech-to-text and text-to-speech conversion

- Audio2Face — Real-time facial animation, lip-sync, and emotional expressions

NPCs powered by ACE perceive audio and visual cues, plan actions autonomously, and interact with players through realistic dialogue and expressions. Developers can fine-tune NPC personalities, memories, and conversational context to create consistent, immersive interactions. The platform supports integration into popular game engines and cloud deployment, enabling scalable AI character implementations for complex gaming scenarios.

Key Features

Fine-tune NPC dialogue with character backstories, personalities, and conversational context.

Speech-to-text and text-to-speech powered by NVIDIA Riva for natural voice interactions.

Real-time facial expressions and lip-sync using Audio2Face in NVIDIA Omniverse.

NPCs perceive audio and visual inputs, act autonomously, and make intelligent decisions.

Cloud or on-device deployment via flexible SDK for scalable, efficient integration.

Get Started

Installation & Setup Guide

Sign up for the NVIDIA Developer Program to obtain the ACE SDK, API credentials, and documentation.

Ensure you have an NVIDIA GPU (RTX series recommended) or cloud instance provisioned for real-time AI inference and processing.

Set up and configure the three core components:

- NeMo — Deploy for dialogue modeling and language understanding

- Riva — Configure for speech-to-text and text-to-speech services

- Audio2Face — Enable for real-time facial animation and expressions

Configure personality traits, memory systems, behavior parameters, and conversational guardrails for each NPC character.

Connect ACE components to Unity, Unreal Engine, or your custom game engine to enable NPC interactions within your game world.

Evaluate dialogue quality, animation smoothness, and response latency. Fine-tune AI parameters and hardware allocation for optimal gameplay experience.

Frequently Asked Questions

NVIDIA Riva provides real-time speech-to-text and text-to-speech capabilities, enabling NPCs to carry on natural, voice-based conversations with players.

Audio2Face provides real-time facial animation, lip-sync, and emotional expressions, making NPCs visually expressive and emotionally engaging.

With RTX GPUs or optimized cloud deployment, ACE supports low-latency interactions suitable for real-time gaming scenarios.

Engine integration and multi-component setup require solid programming knowledge and experience with game development frameworks.

Access is available through NVIDIA's developer program. Enterprise licensing or subscription is required for commercial use.

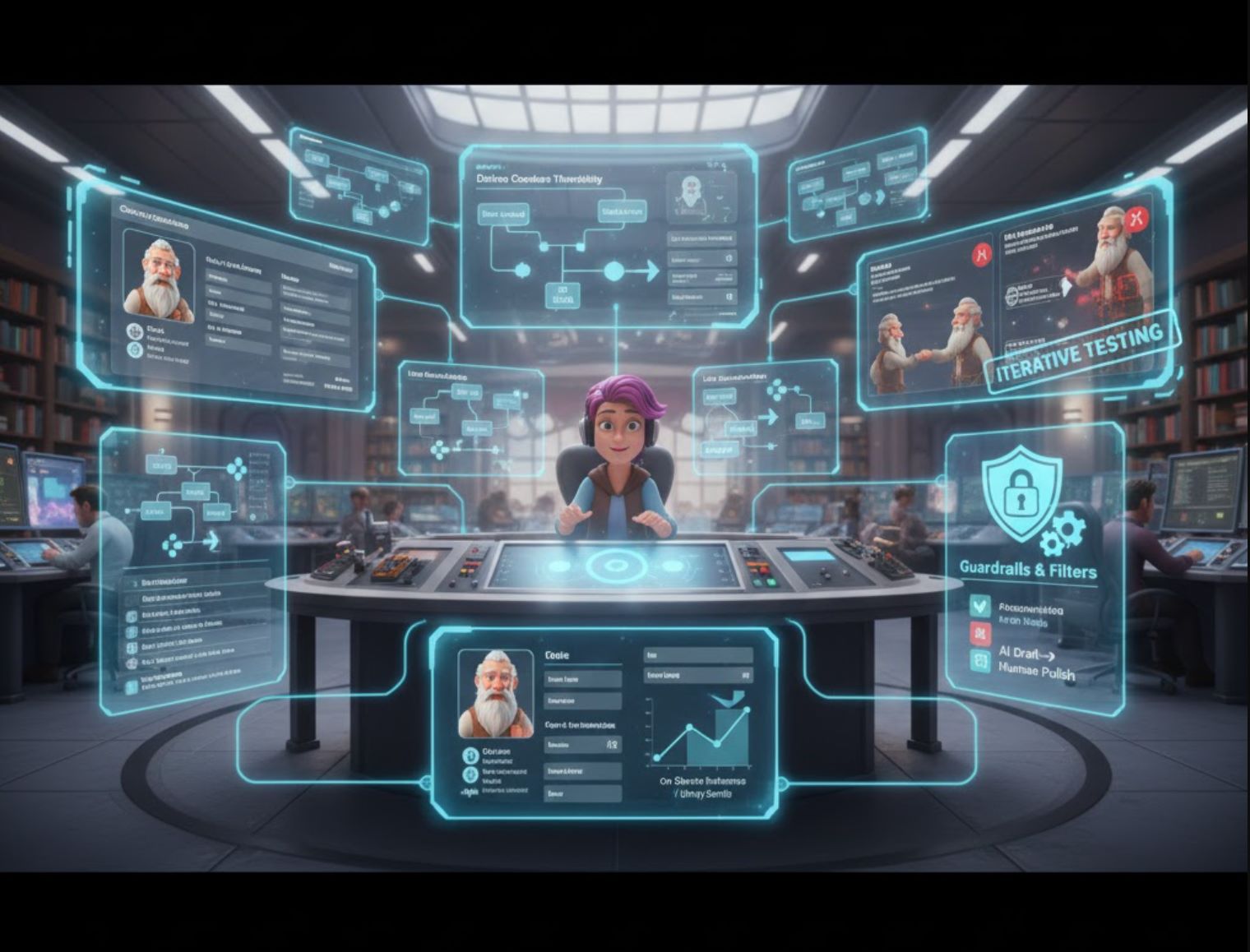

Best Practices for Developers

Define Characters Thoroughly

Write a clear backstory and style for each NPC. Use this as the AI's "system prompt" so it knows how to speak. In Ubisoft's experiment, writers crafted detailed character notes before involving AI.

Maintain Context

Include relevant game context in each prompt. Pass the player's recent chat and any key game events (quests done, relationships) so the AI's reply stays on topic. Many systems store conversation history to simulate memory.
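Because models accept a limited amount of context, stored history usually has to be trimmed before each request. The sketch below keeps the most recent messages that fit a budget. The names `approx_tokens` and `trim_history` are hypothetical, and the four-characters-per-token estimate is a rough assumption for English text, not a real tokenizer.

```python
from typing import Dict, List

Message = Dict[str, str]  # {"role": ..., "content": ...}; assumed chat-style layout


def approx_tokens(text: str) -> int:
    """Very rough token estimate (about 4 characters per token); an assumption."""
    return max(1, len(text) // 4)


def trim_history(history: List[Message], budget: int) -> List[Message]:
    """Keep the most recent messages whose estimated size fits within the budget."""
    kept: List[Message] = []
    used = 0
    for message in reversed(history):  # walk from newest to oldest
        cost = approx_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "a" * 40},
    {"role": "assistant", "content": "b" * 40},
    {"role": "user", "content": "c" * 40},
]
trimmed = trim_history(history, budget=25)
```

With each message estimated at 10 tokens and a budget of 25, only the two newest messages survive. Key game events (completed quests, relationship changes) could be summarized in a short separate line so they are not lost when older chat turns are dropped.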

Use Guardrails

Add filters and constraints. Set word lists for the AI to avoid, or program triggers for special dialogue trees. Ubisoft used guardrails so the NPC never strays from its personality.

Test Iteratively

Playtest chats and refine prompts. If a response feels out of character, work out what led the model there, then tweak the prompt or add example dialogues.

Manage Cost and Performance

Balance AI use strategically. You don't need AI for every throwaway line. Consider pre-generating common responses or combining AI with traditional dialogue trees. Unity's Sentis engine can run optimized models on device to reduce server calls.
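One way to combine scripted lines with generated ones is to check a hand-written table first, then a cache, and call the model only as a last resort. The sketch below uses hypothetical names throughout (`SCRIPTED`, `HybridDialogue`, `normalize`), and the `generate` callable stands in for whatever model call a game actually uses.

```python
import re
from typing import Callable, Dict

SCRIPTED: Dict[str, str] = {  # hypothetical hand-written lines for common inputs
    "hello": "Welcome to the Gilded Flagon!",
    "goodbye": "Safe travels, friend.",
}


def normalize(line: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace to make lookup keys."""
    return " ".join(re.sub(r"[^a-z0-9\s]", "", line.lower()).split())


class HybridDialogue:
    """Answer from scripted lines or a cache before paying for a model call."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self.generate = generate
        self.cache: Dict[str, str] = {}
        self.model_calls = 0  # counts how often the model was actually used

    def reply(self, player_line: str) -> str:
        key = normalize(player_line)
        if key in SCRIPTED:
            return SCRIPTED[key]
        if key not in self.cache:
            self.model_calls += 1
            self.cache[key] = self.generate(player_line)
        return self.cache[key]


npc = HybridDialogue(lambda line: f"generated:{line}")
greeting = npc.reply("Hello!")
rumor_first = npc.reply("Any rumors?")
rumor_again = npc.reply("any rumors")
```

In this run the model is called once: the greeting comes from the scripted table and the repeated question comes from the cache. Caching by input text ignores conversation history, so it suits context-free throwaway lines rather than exchanges that depend on what was said earlier.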

Blend AI with Hands-On Writing

Remember that human writers should curate AI output. Use AI as inspiration, not a final voice. The narrative arc must come from humans. Many teams use AI to draft or expand dialogues, then review and polish the results.

The Future of Game Dialogue

AI is ushering in a new era of video game dialogue. From indie mods to AAA R&D labs, developers are applying generative models to make NPCs talk, react, and remember like never before. Official initiatives like Microsoft's Project Explora and Ubisoft's NEO NPC show the industry embracing this technology—always with an eye on ethics and writer oversight.

Today's tools (GPT-4, Inworld AI, Convai, Unity assets, and others) give creators the power to prototype rich dialogue quickly. In the future, we may see fully procedural narratives and personalized stories generated on the fly. For now, AI dialogue means more creative flexibility and immersion, as long as we use it responsibly alongside human artistry.
